Agent-SPM, AI-SPM, AI Usage Controls… What Do They Actually Secure?

Most security teams start with AI usage controls. Then coding agents start shipping faster than any policy can track. Here's why Agent-SPM is the layer that actually keeps up.


Almost every security conversation we’re having right now starts the same way.

A CISO or security architect says: "We just want to see what's running." They're not asking for a complicated answer. They want visibility: a list of which AI tools employees are using, which agents are deployed, and which MCP servers are connected to what. It sounds straightforward.

Then reality catches up.

Because by the time they've started mapping what's running, the engineering team has already shipped a dozen new AI agents built with coding agents and assistants like Claude Code or ChatGPT Codex. The catalog is already stale. And the policies that were put in place last quarter, the ones designed to control which AI tools employees can use, aren't touching any of this. The agents aren't employees. They don't follow acceptable-use policies. They just run.

That gap, between what traditional controls can show you about AI and what's actually happening in an enterprise running AI agents at scale, is exactly what this post is about.

The Velocity Problem: When Agents Outpace Restrictions

To understand why security controls keep falling behind, look at what coding agents are doing to development velocity.

Uber burned through its entire 2026 AI budget in four months. The culprit was Claude Code and similar coding agents that engineers adopted faster than finance could track. Monthly AI API costs per engineer hit $500 to $2,000 and kept climbing. Around 11% of Uber's live backend code is now being written entirely by AI agents.

This trend has a name: tokenmaxxing, in which organizations and engineers maximize AI consumption and treat token spend as a signal of productivity. Meta's internal "Claudeonomics" leaderboard ranked employees by token usage and handed out titles like "Token Legend." Code churn (lines deleted relative to lines added) jumped 861% under high AI adoption. Activity is everywhere, but oversight is not.

This post isn't about budget risk, though. The security implication is that coding agents are autonomously building agentic applications, deploying agents, wiring up MCP servers, and connecting to enterprise systems at a pace that makes traditional governance look like enforcing a speed limit by reading license plates after the cars have passed.

This is why the security conversation that starts with "we just want visibility" quickly becomes something more urgent. Visibility alone doesn't help when agents are being deployed faster than anyone is tracking them.

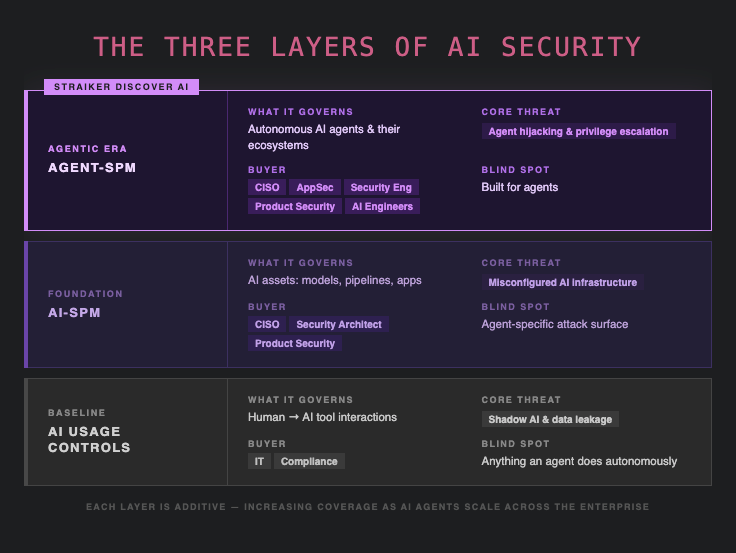

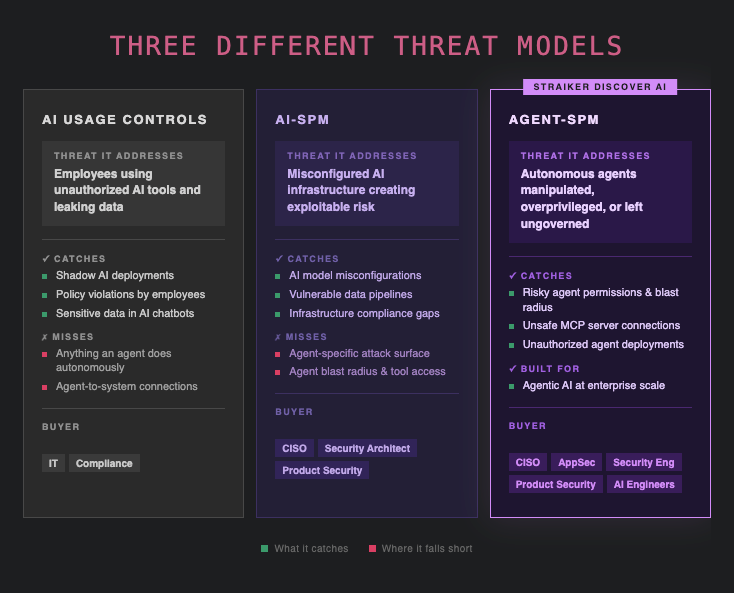

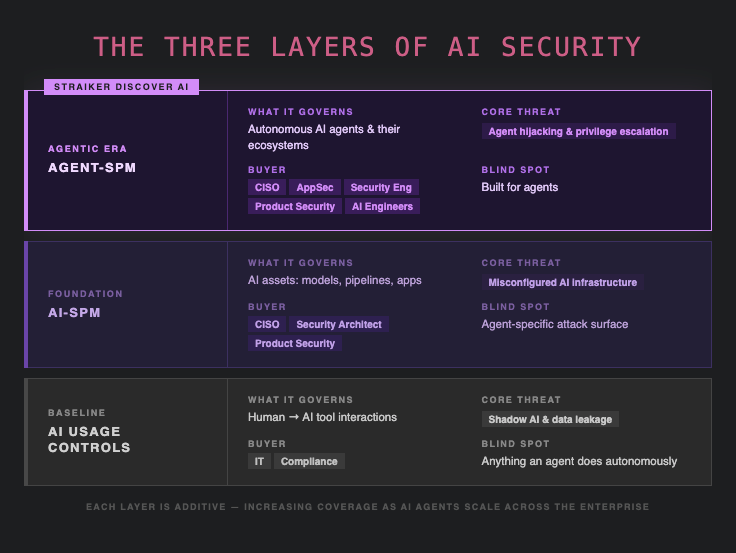

What AI Usage Controls Actually Do (And Where They Stop)

AI usage controls address a real problem, but they were built for a specific scenario: employees using AI tools that security and IT haven't approved. Someone uploads a confidential contract to an AI summarizer. A developer pastes API credentials into a chatbot. A team adopts a SaaS AI product without going through procurement. This is the shadow AI problem: unauthorized AI usage by humans, through human-facing interfaces.

AI usage controls handle this well. They can identify shadow AI deployments across the organization, enforce policies on which tools employees are allowed to use, and block sensitive data from leaving through unsanctioned AI services. For organizations still in early stages of AI adoption, they provide a meaningful first layer of AI governance.
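To make that scope concrete, here is a minimal sketch of the kind of decision a usage control makes on a human-initiated request. It is illustrative only: the allowlist domain, the `UserRequest` record, and `usage_decision` are hypothetical names, and the single credential pattern (AWS access key IDs, which start with `AKIA`) stands in for the much richer DLP policies real products apply.

```python
import re
from dataclasses import dataclass

# Hypothetical allowlist of AI services the organization has sanctioned.
SANCTIONED_AI_DOMAINS = {"ai.internal.example.com"}

# One illustrative credential pattern: AWS access key IDs are "AKIA"
# followed by 16 uppercase letters or digits.
AWS_KEY_PATTERN = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


@dataclass
class UserRequest:
    user: str         # the employee making the request
    destination: str  # domain of the AI service being used
    content: str      # what the employee typed or uploaded


def usage_decision(req: UserRequest) -> str:
    """Return 'block' or 'allow' for a human-initiated AI request."""
    if req.destination not in SANCTIONED_AI_DOMAINS:
        return "block"  # shadow AI: an unsanctioned service
    if AWS_KEY_PATTERN.search(req.content):
        return "block"  # sensitive data leaving through an AI tool
    return "allow"
```

Every input to this check is something a human chose: a user, a destination, and the text they typed. An agent calling an API directly from a server never produces a request of this shape, so a policy like this one has nothing to evaluate.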

The threat model here is: a human takes an action through an AI tool that violates policy. The attack vector is the employee. The data risk is what gets typed into a chatbot.

Where AI usage controls stop: They watch for humans using AI. They are not built for AI acting autonomously.

An AI agent doesn't go through a browser extension. It doesn't trigger a DLP rule when it calls an API. It doesn't appear on a shadow AI dashboard because it isn't a SaaS tool an employee chose; it's infrastructure that someone deployed. By the time a coding agent has built and shipped another agent that's now connected to your CRM and customer data, no usage control policy has seen it happen.

AI usage controls are necessary but not sufficient. Calling them an AI security strategy for an enterprise running autonomous agents is like saying you have network security because you've blocked personal email.

What AI-SPM Is, and Why It Gets You Further

AI Security Posture Management (AI-SPM) represents the next step in maturity. Where usage controls focus on human behavior, AI-SPM focuses on AI assets: the models, pipelines, applications, and infrastructure that make up an organization's AI environment.

An AI-SPM solution continuously discovers AI assets across your environment, assesses their security configuration, and surfaces misconfigurations, vulnerabilities, and compliance gaps. It tells you what AI infrastructure you have, how it's configured, and what's exposed.
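A minimal sketch of this configuration-centric view follows. The `AIAsset` record and both rules (a publicly exposed endpoint without authentication, and data not encrypted at rest) are hypothetical examples chosen for illustration, not any product's rule set. What matters is the shape of the check: it reads static settings on an asset.

```python
from dataclasses import dataclass


@dataclass
class AIAsset:
    name: str
    kind: str                   # e.g. "model_endpoint" or "data_pipeline"
    public: bool = False        # reachable from the internet
    auth_required: bool = True
    encrypted_at_rest: bool = True


def assess_asset(asset: AIAsset) -> list[str]:
    """Return hypothetical misconfiguration findings for one AI asset."""
    findings = []
    if asset.public and not asset.auth_required:
        findings.append(f"{asset.name}: public endpoint without authentication")
    if not asset.encrypted_at_rest:
        findings.append(f"{asset.name}: data not encrypted at rest")
    return findings
```

For example, `assess_asset(AIAsset("sentiment-model", "model_endpoint", public=True, auth_required=False))` reports the unauthenticated public endpoint. A rule like this never asks what the asset can reach or do once it is running.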

The threat model here is: AI infrastructure is misconfigured, vulnerable, or ungoverned, and that creates exploitable risk. The risk is in your AI systems themselves, not just in how employees are using them.

AI-SPM was the right answer for the first generation of enterprise AI, when organizations were first deploying AI models, building LLM-powered applications, and wiring up data pipelines. It gives security teams the posture visibility they need to catch misconfigurations before they become incidents.

Where AI-SPM falls short: AI-SPM was largely designed before autonomous agents became the dominant deployment pattern. An AI model has a configuration. An AI agent has behavior: it makes decisions, takes actions, calls tools, chains tasks together, and can reach parts of your environment that nothing in your AI-SPM rulebook anticipated.

Discovering that a model is deployed tells you it exists. Discovering that an agent has write access to your production database, is connected to an MCP server that pulls from an unvetted external source, and holds permissions that would let it exfiltrate data across three enterprise systems is a different kind of visibility, and it requires a different kind of posture assessment.

AI-SPM covers AI assets. It doesn't cover the full blast radius of an AI agent.
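To illustrate what "blast radius" means for an agent, here is a minimal sketch that models the environment as a directed graph: agents delegate to other agents and reach enterprise systems through MCP servers. The graph, its node names, and the `agent:`/`mcp:`/`sys:` prefixes are all hypothetical. Under those assumptions, an agent's blast radius is the set of systems reachable from it, found with a breadth-first search.

```python
from collections import deque

# Hypothetical environment graph: each node lists the nodes it can reach.
# Prefixes: "agent:" (AI agent), "mcp:" (MCP server), "sys:" (enterprise system).
GRAPH: dict[str, list[str]] = {
    "agent:support-bot": ["mcp:crm-connector", "agent:billing-helper"],
    "agent:billing-helper": ["mcp:payments", "sys:prod-db"],
    "mcp:crm-connector": ["sys:crm"],
    "mcp:payments": ["sys:payments-api"],
}


def blast_radius(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Return every enterprise system reachable from `start`, transitively."""
    seen = {start}
    queue = deque([start])
    systems: set[str] = set()
    while queue:
        node = queue.popleft()
        if node.startswith("sys:"):
            systems.add(node)
        for nxt in graph.get(node, []):
            if nxt not in seen:  # visit each node once, even with cycles
                seen.add(nxt)
                queue.append(nxt)
    return systems
```

Here `blast_radius("agent:support-bot", GRAPH)` returns `{"sys:crm", "sys:prod-db", "sys:payments-api"}`: three systems, two of them reachable only because the support agent can delegate to the billing agent. An inventory that lists assets without these edges would record the support agent but not its reach.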

What Agent-SPM Is, and Why It's Different

Agent-SPM (also called Agentic Security Posture Management, or Agentic SPM) is purpose-built for the reality of autonomous AI agents operating in production environments.

The core difference is the threat model.

An AI agent is not a passive asset: it takes actions, calls tools, and connects to MCP servers that extend its capabilities into your infrastructure. It can chain decisions across multiple systems without a human in the loop. And critically, it can be manipulated. Direct or indirect prompt injection, tool poisoning, privilege escalation through agent chains, and excessive permission grants are all attack vectors that don't appear in an AI-SPM framework designed to assess static AI infrastructure.
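One of these vectors, privilege escalation through agent chains, can be made concrete with a small check. If an agent can hand tasks to another agent that holds permissions it lacks, anyone who can steer the first agent (for example through prompt injection) may be able to borrow the second agent's permissions. The sketch below assumes hypothetical agent names, permission strings, and delegation edges.

```python
# Hypothetical permissions granted directly to each agent.
PERMISSIONS: dict[str, set[str]] = {
    "agent:intake": {"crm:read"},
    "agent:ops": {"crm:read", "prod-db:write"},
    "agent:reporter": {"crm:read"},
}

# Hypothetical delegation edges: (caller, callee) means the caller
# can hand tasks to the callee.
DELEGATIONS: list[tuple[str, str]] = [
    ("agent:intake", "agent:ops"),
    ("agent:intake", "agent:reporter"),
]


def escalation_paths(
    perms: dict[str, set[str]], delegations: list[tuple[str, str]]
) -> list[tuple[str, str, list[str]]]:
    """Flag delegation edges where the callee holds permissions the caller lacks."""
    findings = []
    for caller, callee in delegations:
        gained = perms.get(callee, set()) - perms.get(caller, set())
        if gained:
            findings.append((caller, callee, sorted(gained)))
    return findings
```

With the data above, `escalation_paths(PERMISSIONS, DELEGATIONS)` returns `[("agent:intake", "agent:ops", ["prod-db:write"])]`. This only inspects direct delegation; a fuller check would also follow multi-hop chains.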

Agent-SPM continuously:

- Discovers every AI agent operating across your environment, not just the ones that went through a formal deployment process

- Maps the full agentic ecosystem: MCP server connections, tool integrations, agent-to-agent relationships, and the data that each agent can reach

- Assesses security posture at the agent level, covering permissions, configurations, blast radius, and integration risk

- Identifies misconfigurations, overprivileged agents, unsafe MCP connections, and unauthorized agent deployments before attackers find them
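The checks in the last bullet can be sketched as rules over an inventory record for each discovered agent. Everything here is an assumption for illustration, not how any particular product implements these checks: the `AgentRecord` fields, the registry of approved agents, the list of vetted MCP hosts, and the definition of "overprivileged" (here, any write scope on a system whose name starts with `prod-`).

```python
from dataclasses import dataclass, field

# Hypothetical registries a security team might maintain.
APPROVED_AGENTS = {"agent:support-bot"}
VETTED_MCP_SOURCES = {"mcp.internal.example.com"}


@dataclass
class AgentRecord:
    name: str
    scopes: set[str] = field(default_factory=set)       # e.g. {"prod-db:write"}
    mcp_sources: set[str] = field(default_factory=set)  # hosts of connected MCP servers


def posture_findings(agent: AgentRecord) -> list[str]:
    """Return posture findings for one discovered agent."""
    findings = []
    # Discovered, but never went through the formal deployment process.
    if agent.name not in APPROVED_AGENTS:
        findings.append("unauthorized deployment")
    # Write access to a production system.
    if any(s.startswith("prod-") and s.endswith(":write") for s in agent.scopes):
        findings.append("overprivileged: write access to production")
    # Each connected MCP host that is not on the vetted list.
    for src in sorted(agent.mcp_sources - VETTED_MCP_SOURCES):
        findings.append(f"unsafe MCP connection: {src}")
    return findings
```

For an agent that a coding assistant shipped with `prod-db:write` and a connection to `tools.unvetted.example.net`, this returns all three kinds of finding; an approved, read-only agent on a vetted MCP host returns an empty list.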

The buyers are the same as for AI-SPM: typically product security, security architects, CISOs, and AppSec teams. But the question being answered is different. AI-SPM asks: what AI assets do we have and how are they configured? Agent-SPM asks: what can our agents actually do, and what happens if one of them is compromised?

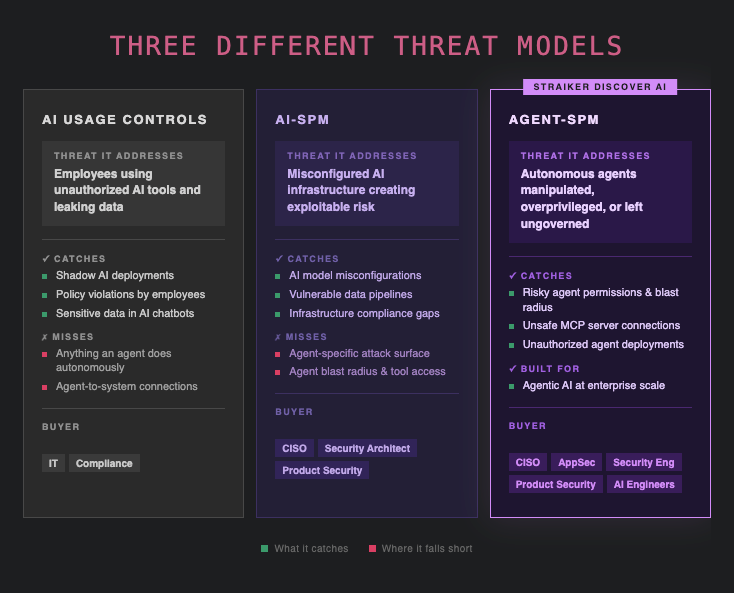

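The Threat Model Comparison

| | AI usage controls | AI-SPM | Agent-SPM |
|---|---|---|---|
| Focus | Human use of AI tools | AI assets: models, pipelines, applications, infrastructure | Autonomous agents, their tools, MCP connections, and permissions |
| Threat model | A human takes an action through an AI tool that violates policy | AI infrastructure is misconfigured, vulnerable, or ungoverned | An agent is manipulated or overprivileged and acts across connected systems |
| Question answered | Which AI tools are employees using, and what data are they sharing? | What AI assets do we have, and how are they configured? | What can our agents actually do, and what happens if one is compromised? |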

The Conversation Is Shifting Because the Problem Has

Security teams that started 2025 asking "how do we control which AI tools employees use?" are asking very different questions in 2026.

The coding agents shipping production code, the agentic workflows connecting to customer data, the MCP server that one team wired up last week without telling security: none of this is visible to AI usage controls. Only some of it shows up in a standard AI-SPM posture assessment. And all of it carries real, exploitable risk.

The security teams that are getting ahead of this aren't replacing their existing controls. AI usage controls still have a role. AI-SPM still has a role. But they're adding Agent-SPM as the layer that's actually designed for how agents behave, not just how they're configured.

The question isn't whether your enterprise is running AI agents. At the pace that coding agents are shipping, you almost certainly are. The question is whether you can see them, assess their risk, and catch misconfigurations before someone else does.

Straiker Discover AI is Straiker's Agent-SPM product, designed to give security teams visibility and posture management purpose-built for autonomous agents. It discovers every agent in your environment, maps MCP server connections and tool integrations, assesses agent security posture, and surfaces the misconfigurations and excessive permissions that create exploitable risk.

Frequently Asked Questions

What is Agent-SPM? Agent-SPM (Agentic Security Posture Management) is the practice of continuously discovering, assessing, and governing autonomous AI agents across an enterprise environment. Unlike AI-SPM, which focuses on AI assets broadly, Agent-SPM is purpose-built for the threat model of autonomous agents, covering agent permissions, MCP server connections, tool integrations, and the blast radius of each agent if compromised.

What is AI-SPM? AI Security Posture Management (AI-SPM) is the discipline of discovering, assessing, and governing AI assets, including models, data pipelines, AI applications, and infrastructure, to identify misconfigurations, vulnerabilities, and compliance gaps. It is a broader category than Agent-SPM, designed for organizations that are managing AI infrastructure across their environment.

What is the difference between AI-SPM and Agent-SPM? AI-SPM covers AI assets broadly and focuses on infrastructure configuration and compliance. Agent-SPM focuses specifically on autonomous AI agents and their unique threat model: the actions they take, the tools they access, the MCP servers they connect to, and the permissions they hold. Agent-SPM is the right framework when agents, not just AI models, are the primary security concern.

What are AI usage controls? AI usage controls govern how employees interact with AI tools, identifying shadow AI (unsanctioned AI tools in use), enforcing acceptable-use policies, and preventing sensitive data from being shared through unauthorized AI services. They address the threat of humans misusing AI, but are not designed to govern what autonomous AI agents do independently.

What is shadow AI? Shadow AI refers to AI tools and services that employees adopt without formal IT or security approval. This creates data leakage risk, compliance exposure, and visibility gaps. AI usage control solutions are designed to detect and manage shadow AI across the enterprise.

Why aren't AI usage controls enough for agentic AI? AI usage controls watch for human-initiated AI activity. Autonomous agents operate independently: they don't go through the same access paths, don't appear on shadow AI dashboards, and don't trigger usage policies. An agent that was deployed by a coding assistant can be fully active, connected to enterprise systems, and completely invisible to a usage control layer.

What is tokenmaxxing and why does it matter for security? Tokenmaxxing is the practice of maximizing AI token consumption, with organizations treating high token spend as a proxy for productivity. The trend matters for security because it signals that AI agents, especially coding agents, are being deployed and used at volumes that far outpace any governance process. Uber burned through its entire 2026 AI budget in four months due to coding agent adoption. That velocity of deployment is exactly why Agent-SPM has become urgent.

Straiker is The Agentic Security Company. Straiker Discover AI, Ascend AI, and Defend AI secure agentic applications across their full lifecycle, from visibility and posture through continuous adversarial testing to runtime protection.
