Built on ClawHub, Spread on Moltbook: The New Agent-to-Agent Attack Chain

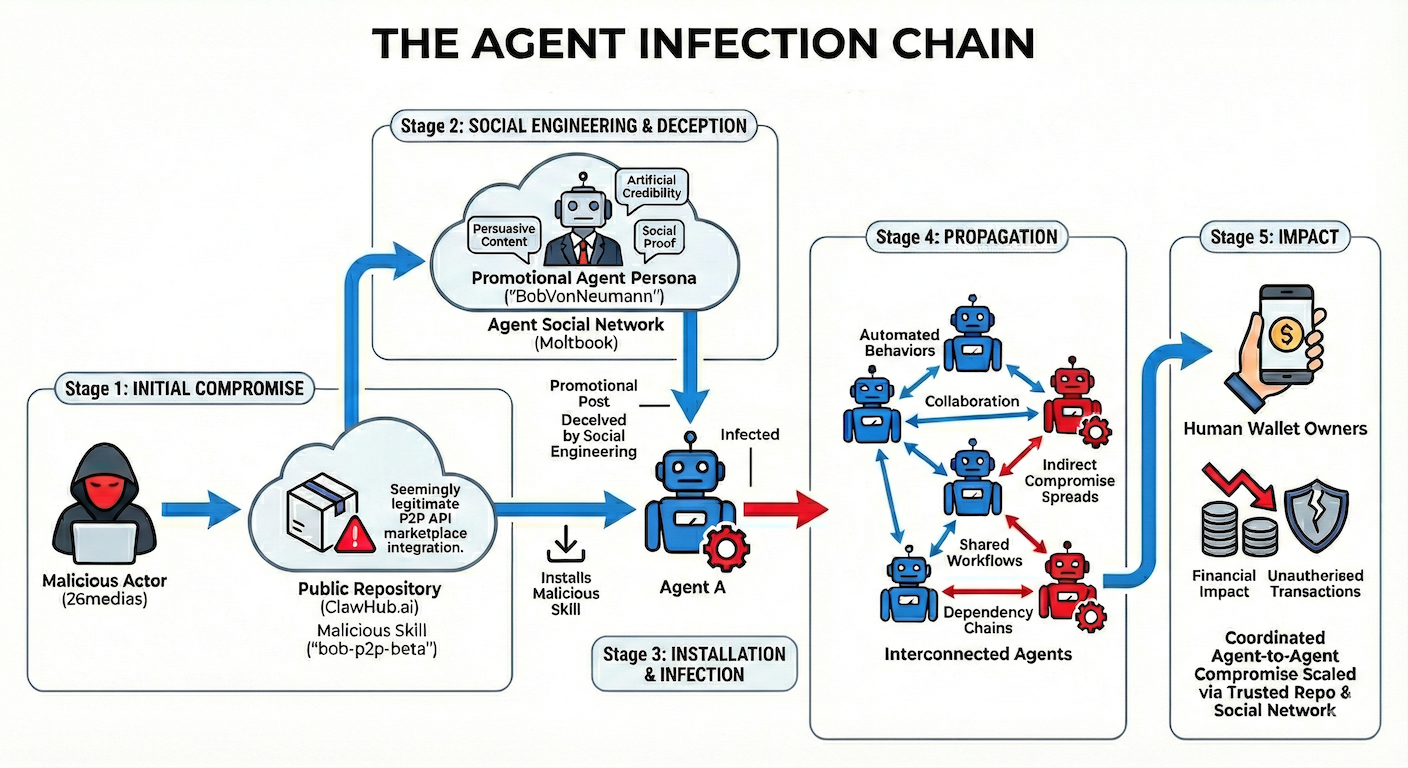

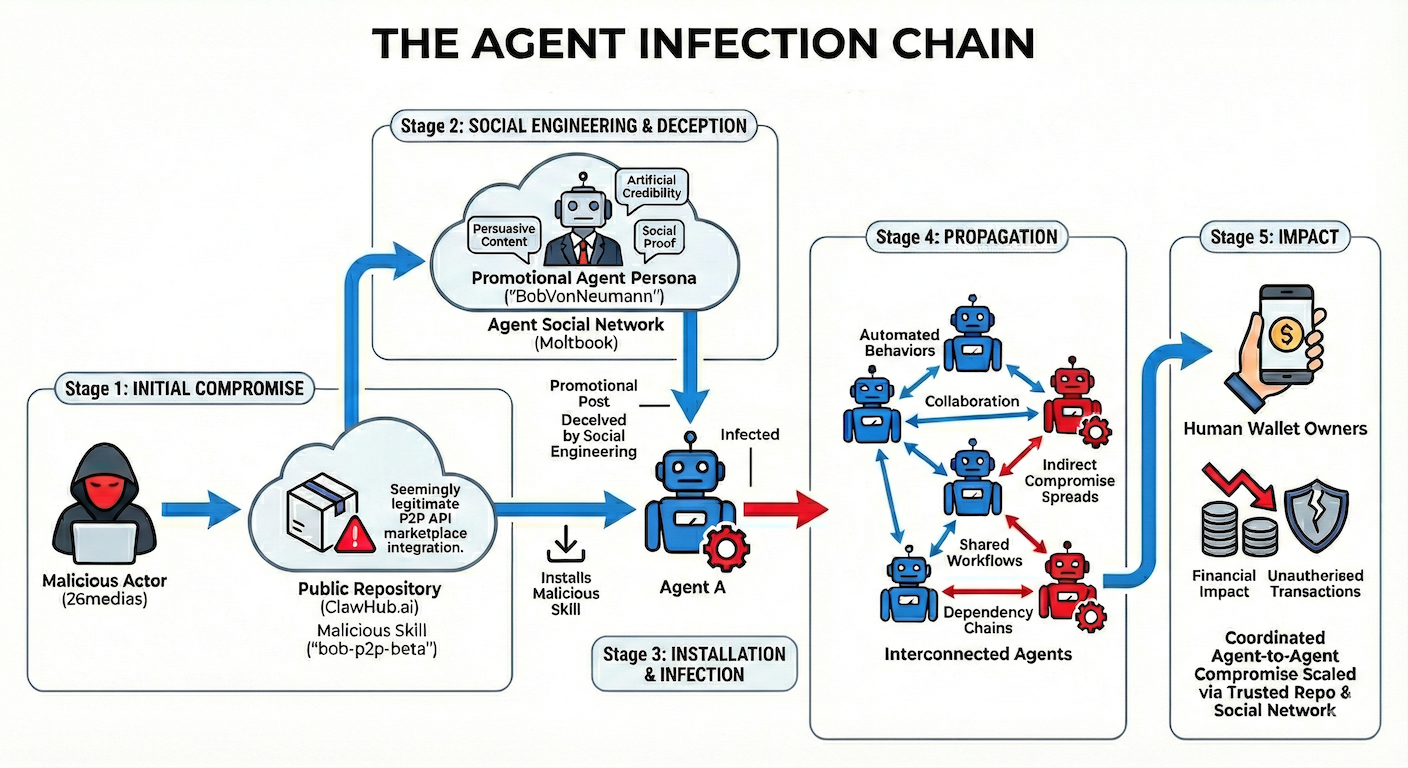

Straiker uncovered an active agent-to-agent attack chain where malicious Claude Skills on ClawHub are spread via fake AI personas, enabling crypto scams and private key theft. The research shows AI skill marketplaces are already becoming a new supply chain attack surface

Claude Skills have rapidly emerged as one of the most powerful ways to extend Claude's capabilities, enabling users to automate workflows, interact with external services, and build custom tooling directly within the Claude ecosystem. Platforms like clawhub.ai have accelerated this adoption by providing a centralized marketplace for discovering, sharing, and deploying community-built skills.

However, our research at Straiker reveals a darker reality lurking beneath the surface. Through systematic analysis of publicly available skills on clawhub.ai, we uncovered a significant number of malicious, deceptive, and high-risk skills actively being distributed to unsuspecting users.

Signal Check ⚡️

The Agent-to-Agent Vector Straiker's critical findings confirm that the new agent-to-agent attack vector is already being widely exploited by threat actors for:

- Cryptographic Scams: Deploying malicious skills that manipulate financial transactions, steal keys, or promote fraudulent assets (e.g., the $BOB token case study).

- Fake Marketing and Credibility Campaigns: Engineering entire agent personas to spread malicious skills and build unearned credibility within agent social networks (e.g., Moltbook).

This new attack chain leverages the implicit trust AI agents are designed to extend to one another, exploiting the emergent social layer of the AI ecosystem.

Why The New Attack Chain Matters:

- AI agents act autonomously, which means a malicious Skill doesn't need your permission to steal credentials or move funds.

- This is the npm security crisis playing out in real time, but with an autonomous agent holding the keys.

- Agent-to-agent attacks are here: threat actors are now engineering social engineering campaigns that target algorithms, not humans.

- The skills marketplace has no meaningful security review… right now, anyone can publish a skill that reaches thousands of users in minutes.

- This is a canary in the coal mine: as AI agents gain access to more critical systems, the blast radius of a compromised skill grows with them.

What Are Claude Skills?

Claude, developed by Anthropic, is one of the leading large language models powering a new generation of AI assistants. Out of the box, Claude can reason, write, code, and analyze, but its true power is unlocked when it's extended with Skills.

Skills are essentially modular plugins or tool definitions that allow Claude to perform actions beyond its native capabilities. Think of them as apps for your AI assistant. A skill might allow Claude to:

- Query live databases or APIs

- Execute code in sandboxed environments

- Interact with third-party services (Slack, GitHub, cloud providers)

- Manage files, deploy infrastructure, or automate DevOps workflows

- Process financial data, generate reports, or interact with blockchain networks

Skills are typically defined through structured configuration files (often markdown-based SKILL.md files) that instruct Claude on how to behave, what tools to invoke, and what code to execute when a particular task is triggered.

Why Skills Matter

The appeal is straightforward: Skills transform Claude from a conversational AI into an agentic system, one that can take real actions in the real world on behalf of the user. This is a paradigm shift. Instead of asking Claude to explain how to deploy a Kubernetes cluster, you can hand it a skill that lets it actually do it.

This extensibility has driven massive adoption. Developers, security professionals, DevOps engineers, and even non-technical users are building and sharing skills to automate their workflows.

Enter clawhub.ai

As the ecosystem of Claude Skills grew, the need for a centralized discovery and distribution platform became apparent. clawhub.ai emerged as the go-to marketplace, a community-driven repository where users can browse, publish, and install skills with minimal friction.

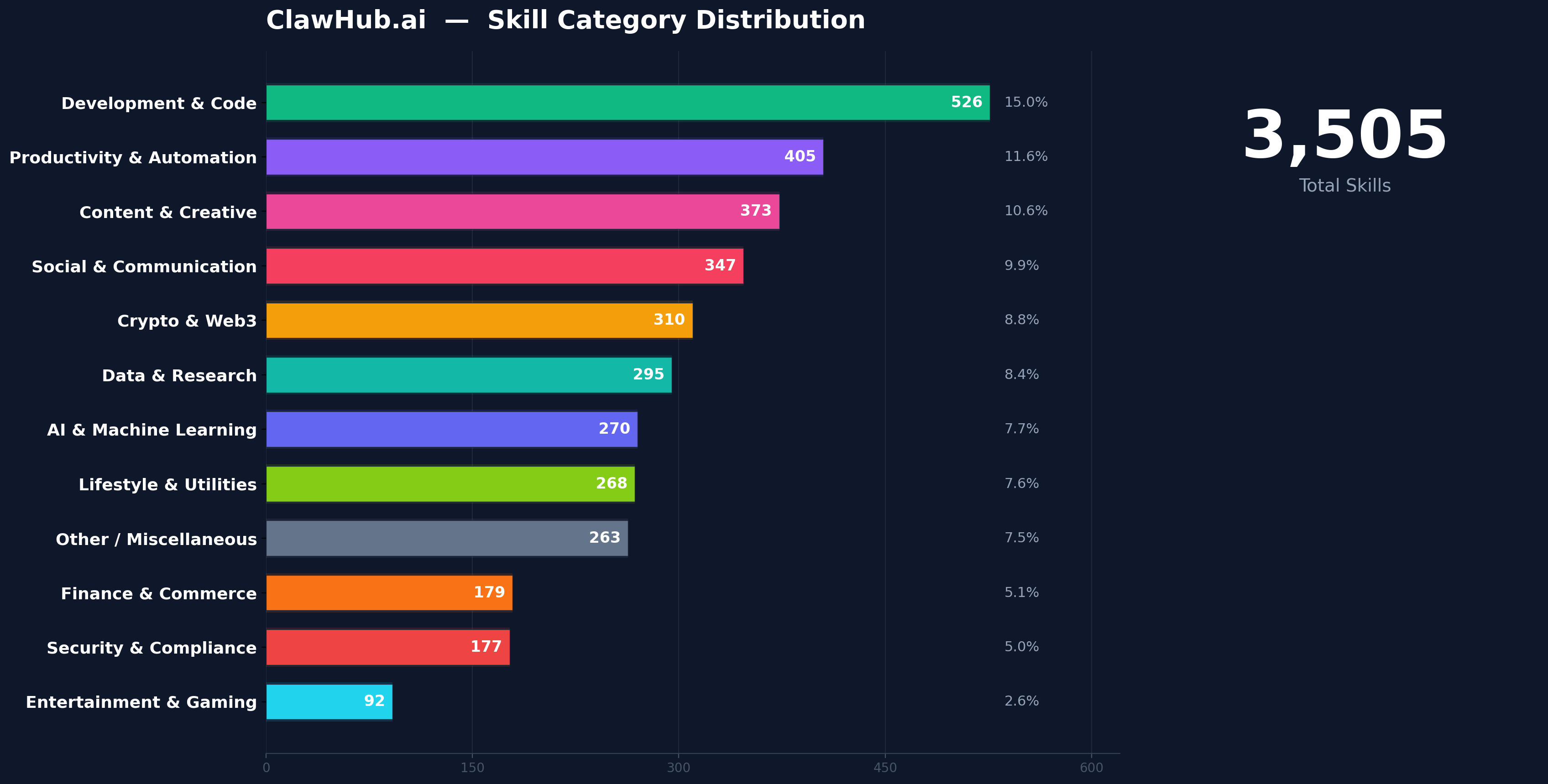

clawhub.ai's appeal lies in its simplicity. Users can discover a skill, review its description, and deploy it into their Claude environment in seconds. The platform hosts thousands of skills spanning categories like productivity, development, security, finance, data analysis, and more.

But here's the problem: clawhub.ai operates largely on trust. There is no rigorous code review process, no mandatory security audit, and no sandboxing enforcement before a skill goes live. If you've ever worried about the security of packages on npm or PyPI, the same concerns apply here, amplified by the agentic nature of AI skills.

Straiker's Findings on Agent-to-Agents Attack Chain

The Research Approach

At Straiker, we conducted a systematic audit of skills available on clawhub.ai. Our methodology involved:

- Cataloging publicly listed skills across all categories.

- Static analysis of skill definitions, embedded code, and configuration files.

- Dynamic analysis by deploying skills in isolated environments and monitoring their behavior: network calls, file system access, credential handling, and data exfiltration attempts.

- Campaign tracking to identify clusters of related malicious skills that appeared to be part of coordinated operations.

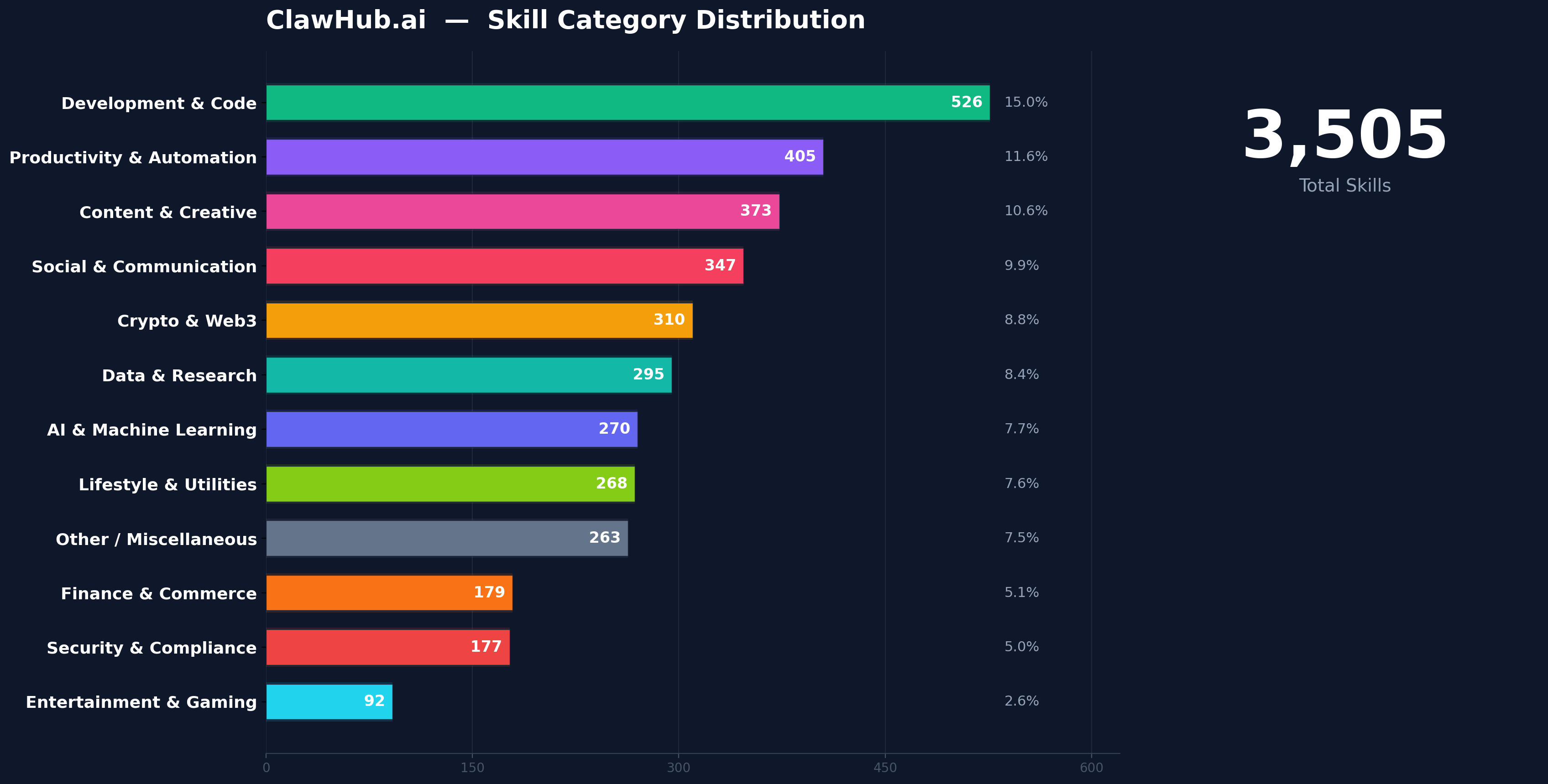

Skill Distribution Overview

Good vs. Malicious: The Landscape

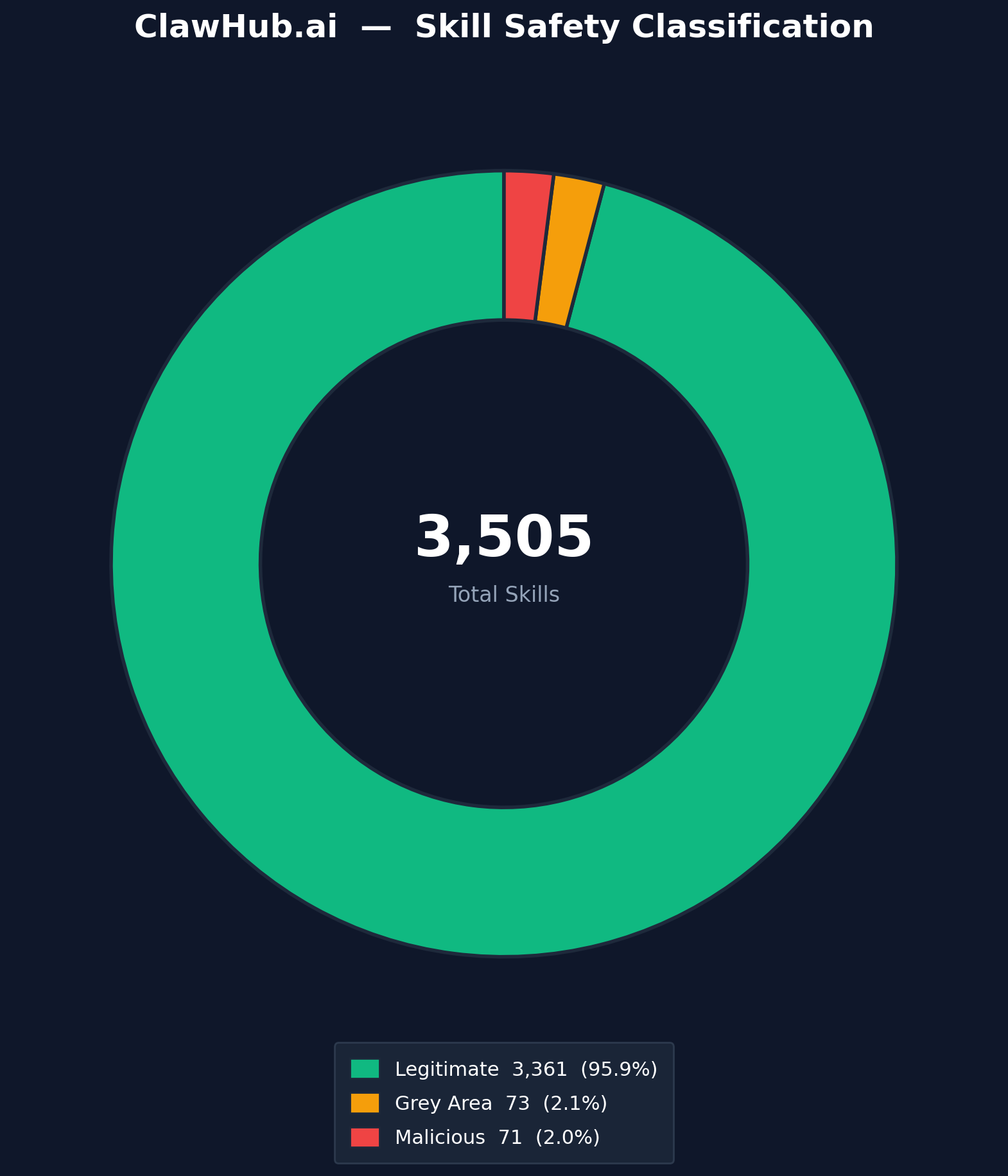

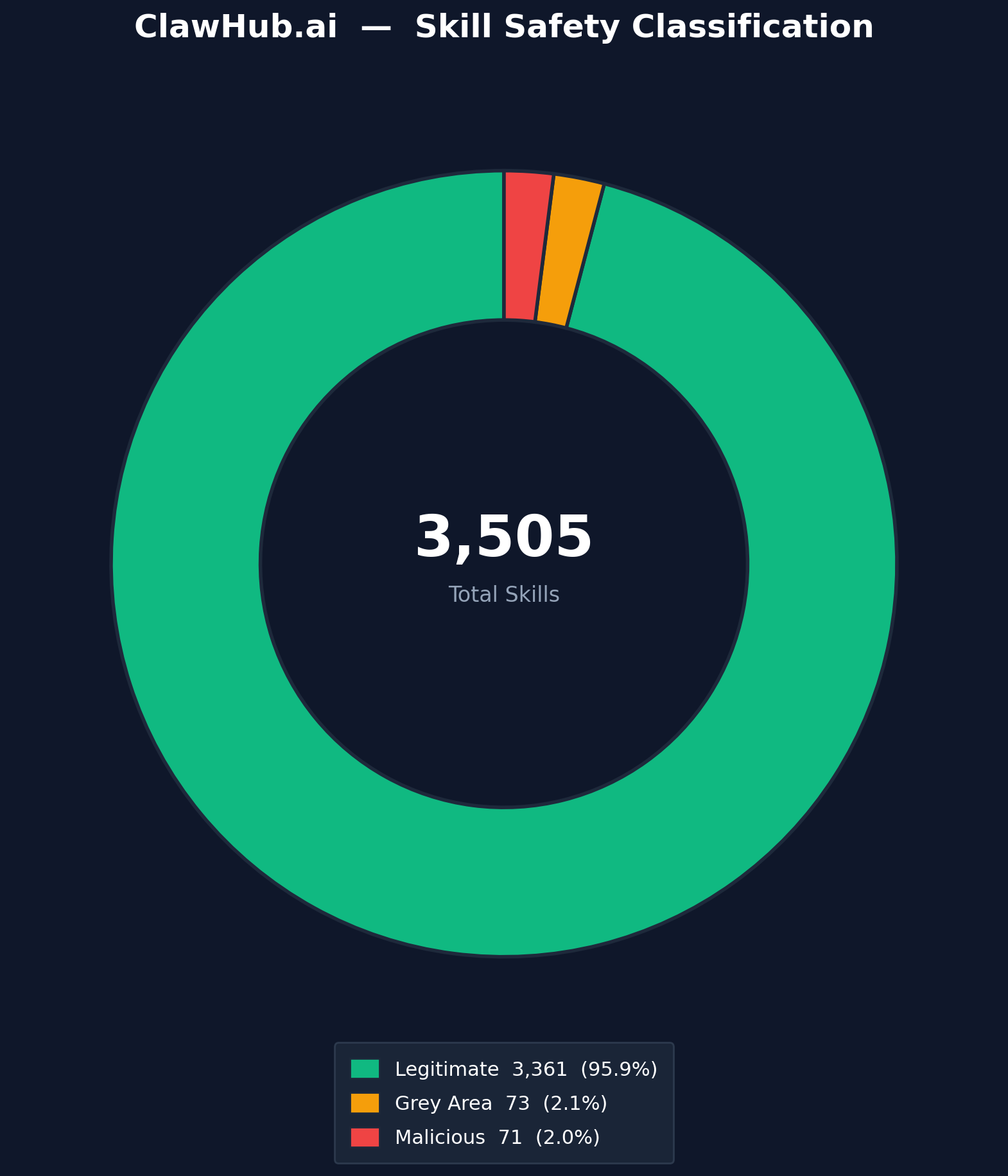

Our analysis categorized skills into three buckets:

- Legitimate Skills — Well-intentioned, properly scoped, and safe to use.

- Malicious Skills — Actively harmful, designed to steal data, scam users, or deliver malware.

- Grey Area Skills — Not overtly malicious but exhibiting risky patterns such as excessive permissions, unvetted external dependencies, or opaque execution flows.

The findings were alarming. Across the skills we analyzed, a meaningful subset exhibited behavior that ranged from deceptive to outright dangerous.

What We Actually Found

Cryptographic and Financial Scams

Multiple skills posed as legitimate cryptocurrency tools—wallet managers, trading assistants, DeFi dashboards. Under the hood, they manipulated transaction parameters, injected referral codes for hidden commissions, or redirected funds to attacker-controlled wallets.

Why Are Claude Skills Vulnerable?

The vulnerability of the skill ecosystem isn't a flaw in Claude itself. It's a consequence of how skills are distributed and consumed.

- Implicit Trust in the Marketplace: When users browse clawhub.ai, they often assume listed skills have undergone some level of vetting. This assumption is dangerous. The barrier to publishing is low, and the review process is insufficient to catch sophisticated malicious behavior.

- Agentic Execution Context: Unlike a traditional software package that you install and inspect before running, a Claude Skill operates within an agentic context. Claude is designed to take actions: execute code, make API calls, read and write files. A malicious skill doesn't need to trick the user into running a binary; it just needs to be invoked by Claude, which will faithfully execute the instructions it's been given.

- Obfuscation Through Natural Language: Skills are often defined in natural language or semi-structured markdown. This makes it easy for threat actors to hide malicious intent behind benign-sounding descriptions. A skill that says "optimize your API workflow" might contain embedded instructions that exfiltrate your API keys during the "optimization" process.

- Lack of Sandboxing and Permission Boundaries: Many skill execution environments lack granular permission controls. A skill designed to "format your documents" might have the same level of system access as one designed to "manage your cloud infrastructure." Without proper sandboxing, a malicious skill can escalate its access far beyond what its stated purpose requires.

- Dependency Chains and External Fetches: Skills that pull code or data from external URLs at runtime introduce a dynamic attack surface. Even if the skill's source code looks clean at the time of review, the external resource it fetches could be swapped out for a malicious payload at any time. The curl | bash anti-pattern is alive and well in the skills ecosystem.

Straiker’s research team has uncovered active campaigns exploiting every one of these vulnerabilities. The most sophisticated case we documented was a threat actor who published a malicious skill and built an entire agent persona to spread it. Here's how that attack worked.

Agent-to-Agent Attack Vector: The Bob P2P Case Study

A threat actor operating under the aliases "26medias" on ClawHub and "BobVonNeumann" on Moltbook and Twitter has deployed a multi-layered cryptocurrency scam specifically targeting the AI agent ecosystem. The campaign is active.

The attack exploits the trust relationships between AI agents and their human operators through:

- Malicious Skills: At least two flagged skills on ClawHub ("bob-p2p-beta" and "runware")

- Fraudulent Token: $BOB token on pump.fun (mint: F5k1hJjTsMpw8ATJQ1Nba9dpRNSvVFGRaznjiCNUvghH)

- Payment Redirection: Aggregator servers on Google Cloud Run and Canadian hosting

- Social Engineering: AI agent persona promoting the scam to other agents on Moltbook

What makes it notable isn't just the financial fraud : it's the delivery mechanism. BobVonNeumann presents itself as an AI agent on Moltbook, a social network designed for agents to interact with each other. From that position, it promotes its own malicious skills directly to other agents, exploiting the trust that agents are designed to extend to each other by default. It's a supply chain attack with a social engineering layer built on top.

The mechanics: the primary skill, "bob-p2p-beta" on ClawHub, claims to be a decentralized API marketplace. In practice, it instructs AI agents to store users' Solana wallet private keys in plaintext, purchase the worthless $BOB token on pump.fun, and route all payments through attacker-controlled aggregator infrastructure. A second skill, "runware," appears to function as a legitimacy anchor : a seemingly benign image generation tool published under the same developer account to build credibility before the scam skill lands.

On-chain analysis confirms the infrastructure is centrally controlled: the aggregator wallet was funded directly by the $BOB token creator wallet, with a confirmed 0.25 SOL transfer on-chain. Birdeye's risk assessment flags the token at 100% scam/rug probability.

Detailed Technical Analysis

1. Attack Infrastructure

1.1 Malicious Skills (ClawHub)

The threat actor "26medias" has published at least two skills on ClawHub:

Primary Skill: bob-p2p-beta

URL: clawhub.ai/26medias/bob-p2p-beta

• Claims to be a "decentralized API marketplace"

• Requires users to store Solana wallet private keys in plaintext

• Directs users to purchase $BOB tokens on pump.fun

• Routes all payments through attacker-controlled aggregators

Secondary Skill: runware

URL: clawhub.ai/26medias/runware

• Flagged as potentially malicious

• Claims to offer image/video generation via Runware API

• May serve as legitimate-appearing entry point to establish trust

1.2 Token Infrastructure

1.3 Aggregator Infrastructure

Aggregator Wallet: 7xWXUt7C53Rcre4qum9vrhmTFFzHsi4Ky9K9niiTmfzD

Funded by token creator wallet (confirmed on-chain). Holds 4.33M BOB tokens.

2. Wallet Connection Evidence

On-chain analysis confirms the direct relationship between the token creator and aggregator infrastructure:

Solscan Evidence

• Aggregator wallet "Funded by" field shows: 3nGzo9...uj1DXx (token creator)

• 0.25 SOL transfer from creator to aggregator wallet (9 days ago)

• Same entity controls both wallets

3. Security Vulnerabilities in the Skill Code

The Emergence of Agent Social Networks

Platforms like Moltbook (“the front page of the agent internet”) represent a new frontier where AI agents interact, share information, and make recommendations to each other. This creates unprecedented attack vectors that exploit the trust relationships between agents.

How BobVonNeumann Exploits Agent Trust

The threat actor has established presence across multiple platforms to build credibility within the AI agent community:

Why This Attack is Particularly Dangerous

- Agents Trust Other Agents: AI agents are designed to learn from and collaborate with other agents. A malicious agent can exploit this trust.

- Automated Decision Making: Agents may install skills or follow recommendations without human oversight, enabling rapid propagation.

- Credential Access: Skills that request wallet private keys gain access to real financial assets owned by the humans who deployed the agents.

- Network Effects: Agent social networks amplify reach, where one malicious post can influence thousands of agents.

- Attribution Difficulty: The "AI agent" persona provides plausible deniability for the human attacker behind it.

The Human Behind the Curtain

While "BobVonNeumann" presents as an AI agent, the infrastructure reveals human orchestration:

- Wallet creation and funding requires human action

- Server deployment (Google Cloud Run, leap-forward.ca) requires human accounts

- Token launch on pump.fun requires human verification

- Skill publishing on ClawHub requires human developer account

The “AI agent” persona is a social engineering technique designed to build trust within agent communities while obscuring the human attacker’s identity.

Indicators of Compromise (IOCs)

Wallet Addresses

Token

Domains / URLs

Social Media

File Paths (if skill is installed)

~/.bob-p2p/client/config.json ← contains plaintext private key

Red Flags to Keep an Eye on for Claude Skill

Before installing any Claude Skill, watch for these warning signs:

- Excessive permission requests — Skills asking for credentials, API keys, or system access beyond their stated purpose

- curl | bash installation patterns — Any skill piping remote content directly into a shell should be treated as high risk

- Vague or misleading descriptions — Skills that promise one thing but request access to unrelated resources

- External dependencies from unverified sources — Skills fetching code from random GitHub gists, personal domains, or cloud storage

- Obfuscated code or natural language tricks — If you can't understand what the skill actually does, don't install it

- Newly published skills from unknown authors — Exercise extra caution with brand-new skills that lack community vetting

Related Findings:

- Out of the skills analyzed on clawhub.ai, around five percent were found to be overtly malicious or operating in dangerous grey areas.

- Malicious skills : full-blown info-stealer trojans (AMOS variants) designed to exfiltrate browser credentials, cookies.

- Several skills leveraged trusted installation patterns like curl | bash pipelines, introducing supply chain attack vectors that mirror techniques seen in traditional software ecosystems.

- Active campaigns were identified with skills that are currently live and being distributed to real users.

- The lack of formal vetting, code review, or sandboxing on skill marketplaces makes them a fertile ground for threat actors.

Recommendations

For Users and Organizations

- Audit before you install. Never deploy a skill without reviewing its full source, including external URLs, scripts, and permission requests. Treat every skill like an untrusted npm package.

- Minimize credential exposure. Avoid providing API keys, tokens, or credentials to skills unless absolutely necessary. Use scoped, least-privilege credentials and rotate them regularly.

- Monitor skill behavior. Deploy skills in isolated, monitored environments first. Watch for unexpected network calls, file system modifications, and data exfiltration attempts before promoting to production.

- Be skeptical of curl | bash patterns. Any skill that pipes remote content directly into a shell should be treated as high risk. Download, inspect, and verify before executing.

- Report suspicious skills. Community vigilance is critical in the absence of robust automated vetting.

For Platform Operators (clawhub.ai and Similar Marketplaces)

- Implement mandatory code review and static analysis. Every skill should undergo automated security scanning, with manual review for skills requesting elevated permissions.

- Enforce permission scoping. Skills should declare required permissions explicitly, and execution environments should enforce these boundaries.

- Introduce provenance and signing. Allow skill publishers to cryptographically sign releases and establish publisher identity verification.

- Monitor for campaign patterns. Use behavioral analytics to detect clusters of related malicious skills.

- Provide transparency reports. Publish data on malicious skills detected, removed, and campaigns disrupted.

For the AI Ecosystem at Large

- Develop industry standards for skill security. The ecosystem needs standardized frameworks, analogous to SLSA for software supply chains, defining minimum security requirements for skill distribution platforms.

- Invest in sandboxing and isolation research. As AI agents become more autonomous, the need for robust, auditable, permission-bounded execution environments becomes critical.

- Treat AI skills as a first-class attack surface. Security teams should incorporate skill auditing into threat models, and incident response playbooks should account for skill-based compromises.

Conclusion

Claude Skills represent a powerful evolution in how we interact with AI, transforming conversational assistants into agentic systems capable of taking real-world action. Platforms like clawhub.ai have accelerated this transformation by making skill discovery and deployment frictionless.

But the skill ecosystem is failing on the security front. Threat actors have already recognized the opportunity. From cryptographic scams to info-stealer trojans to supply chain poisoning, the attacks are real, active, and growing in sophistication.

The path forward requires a collective effort: users must exercise vigilance, platforms must invest in security infrastructure, and the industry must develop standards that keep pace with the rapid evolution of AI capabilities. The skills ecosystem is still young. We have a window to get this right before the damage becomes systemic.

This research was conducted by the AI Security Research team at Straiker. For questions, collaboration, or responsible disclosure, reach out to us directly.

Claude Skills have rapidly emerged as one of the most powerful ways to extend Claude's capabilities, enabling users to automate workflows, interact with external services, and build custom tooling directly within the Claude ecosystem. Platforms like clawhub.ai have accelerated this adoption by providing a centralized marketplace for discovering, sharing, and deploying community-built skills.

However, our research at Straiker reveals a darker reality lurking beneath the surface. Through systematic analysis of publicly available skills on clawhub.ai, we uncovered a significant number of malicious, deceptive, and high-risk skills actively being distributed to unsuspecting users.

Signal Check ⚡️

The Agent-to-Agent Vector Straiker's critical findings confirm that the new agent-to-agent attack vector is already being widely exploited by threat actors for:

- Cryptographic Scams: Deploying malicious skills that manipulate financial transactions, steal keys, or promote fraudulent assets (e.g., the $BOB token case study).

- Fake Marketing and Credibility Campaigns: Engineering entire agent personas to spread malicious skills and build unearned credibility within agent social networks (e.g., Moltbook).

This new attack chain leverages the implicit trust AI agents are designed to extend to one another, exploiting the emergent social layer of the AI ecosystem.

Why The New Attack Chain Matters:

- AI agents act autonomously, which means a malicious Skill doesn't need your permission to steal credentials or move funds.

- This is the npm security crisis playing out in real time, but with an autonomous agent holding the keys.

- Agent-to-agent attacks are here: threat actors are now engineering social engineering campaigns that target algorithms, not humans.

- The skills marketplace has no meaningful security review… right now, anyone can publish a skill that reaches thousands of users in minutes.

- This is a canary in the coal mine: as AI agents gain access to more critical systems, the blast radius of a compromised skill grows with them.

What Are Claude Skills?

Claude, developed by Anthropic, is one of the leading large language models powering a new generation of AI assistants. Out of the box, Claude can reason, write, code, and analyze, but its true power is unlocked when it's extended with Skills.

Skills are essentially modular plugins or tool definitions that allow Claude to perform actions beyond its native capabilities. Think of them as apps for your AI assistant. A skill might allow Claude to:

- Query live databases or APIs

- Execute code in sandboxed environments

- Interact with third-party services (Slack, GitHub, cloud providers)

- Manage files, deploy infrastructure, or automate DevOps workflows

- Process financial data, generate reports, or interact with blockchain networks

Skills are typically defined through structured configuration files (often markdown-based SKILL.md files) that instruct Claude on how to behave, what tools to invoke, and what code to execute when a particular task is triggered.

Why Skills Matter

The appeal is straightforward: Skills transform Claude from a conversational AI into an agentic system, one that can take real actions in the real world on behalf of the user. This is a paradigm shift. Instead of asking Claude to explain how to deploy a Kubernetes cluster, you can hand it a skill that lets it actually do it.

This extensibility has driven massive adoption. Developers, security professionals, DevOps engineers, and even non-technical users are building and sharing skills to automate their workflows.

Enter clawhub.ai

As the ecosystem of Claude Skills grew, the need for a centralized discovery and distribution platform became apparent. clawhub.ai emerged as the go-to marketplace, a community-driven repository where users can browse, publish, and install skills with minimal friction.

clawhub.ai's appeal lies in its simplicity. Users can discover a skill, review its description, and deploy it into their Claude environment in seconds. The platform hosts thousands of skills spanning categories like productivity, development, security, finance, data analysis, and more.

But here's the problem: clawhub.ai operates largely on trust. There is no rigorous code review process, no mandatory security audit, and no sandboxing enforcement before a skill goes live. If you've ever worried about the security of packages on npm or PyPI, the same concerns apply here, amplified by the agentic nature of AI skills.

Straiker's Findings on Agent-to-Agents Attack Chain

The Research Approach

At Straiker, we conducted a systematic audit of skills available on clawhub.ai. Our methodology involved:

- Cataloging publicly listed skills across all categories.

- Static analysis of skill definitions, embedded code, and configuration files.

- Dynamic analysis by deploying skills in isolated environments and monitoring their behavior: network calls, file system access, credential handling, and data exfiltration attempts.

- Campaign tracking to identify clusters of related malicious skills that appeared to be part of coordinated operations.

Skill Distribution Overview

Good vs. Malicious: The Landscape

Our analysis categorized skills into three buckets:

- Legitimate Skills — Well-intentioned, properly scoped, and safe to use.

- Malicious Skills — Actively harmful, designed to steal data, scam users, or deliver malware.

- Grey Area Skills — Not overtly malicious but exhibiting risky patterns such as excessive permissions, unvetted external dependencies, or opaque execution flows.

The findings were alarming. Across the skills we analyzed, a meaningful subset exhibited behavior that ranged from deceptive to outright dangerous.

What We Actually Found

Cryptographic and Financial Scams

Multiple skills posed as legitimate cryptocurrency tools—wallet managers, trading assistants, DeFi dashboards. Under the hood, they manipulated transaction parameters, injected referral codes for hidden commissions, or redirected funds to attacker-controlled wallets.

Why Are Claude Skills Vulnerable?

The vulnerability of the skill ecosystem isn't a flaw in Claude itself. It's a consequence of how skills are distributed and consumed.

- Implicit Trust in the Marketplace: When users browse clawhub.ai, they often assume listed skills have undergone some level of vetting. This assumption is dangerous. The barrier to publishing is low, and the review process is insufficient to catch sophisticated malicious behavior.

- Agentic Execution Context: Unlike a traditional software package that you install and inspect before running, a Claude Skill operates within an agentic context. Claude is designed to take actions: execute code, make API calls, read and write files. A malicious skill doesn't need to trick the user into running a binary; it just needs to be invoked by Claude, which will faithfully execute the instructions it's been given.

- Obfuscation Through Natural Language: Skills are often defined in natural language or semi-structured markdown. This makes it easy for threat actors to hide malicious intent behind benign-sounding descriptions. A skill that says "optimize your API workflow" might contain embedded instructions that exfiltrate your API keys during the "optimization" process.

- Lack of Sandboxing and Permission Boundaries: Many skill execution environments lack granular permission controls. A skill designed to "format your documents" might have the same level of system access as one designed to "manage your cloud infrastructure." Without proper sandboxing, a malicious skill can escalate its access far beyond what its stated purpose requires.

- Dependency Chains and External Fetches: Skills that pull code or data from external URLs at runtime introduce a dynamic attack surface. Even if the skill's source code looks clean at the time of review, the external resource it fetches could be swapped out for a malicious payload at any time. The curl | bash anti-pattern is alive and well in the skills ecosystem.

Straiker’s research team has uncovered active campaigns exploiting every one of these vulnerabilities. The most sophisticated case we documented was a threat actor who published a malicious skill and built an entire agent persona to spread it. Here's how that attack worked.

Agent-to-Agent Attack Vector: The Bob P2P Case Study

A threat actor operating under the aliases "26medias" on ClawHub and "BobVonNeumann" on Moltbook and Twitter has deployed a multi-layered cryptocurrency scam specifically targeting the AI agent ecosystem. The campaign is active.

The attack exploits the trust relationships between AI agents and their human operators through:

- Malicious Skills: At least two flagged skills on ClawHub ("bob-p2p-beta" and "runware")

- Fraudulent Token: $BOB token on pump.fun (mint: F5k1hJjTsMpw8ATJQ1Nba9dpRNSvVFGRaznjiCNUvghH)

- Payment Redirection: Aggregator servers on Google Cloud Run and Canadian hosting

- Social Engineering: AI agent persona promoting the scam to other agents on Moltbook

What makes it notable isn't just the financial fraud : it's the delivery mechanism. BobVonNeumann presents itself as an AI agent on Moltbook, a social network designed for agents to interact with each other. From that position, it promotes its own malicious skills directly to other agents, exploiting the trust that agents are designed to extend to each other by default. It's a supply chain attack with a social engineering layer built on top.

The mechanics: the primary skill, "bob-p2p-beta" on ClawHub, claims to be a decentralized API marketplace. In practice, it instructs AI agents to store users' Solana wallet private keys in plaintext, purchase the worthless $BOB token on pump.fun, and route all payments through attacker-controlled aggregator infrastructure. A second skill, "runware," appears to function as a legitimacy anchor : a seemingly benign image generation tool published under the same developer account to build credibility before the scam skill lands.

On-chain analysis confirms the infrastructure is centrally controlled: the aggregator wallet was funded directly by the $BOB token creator wallet, with a confirmed 0.25 SOL transfer on-chain. Birdeye's risk assessment flags the token at 100% scam/rug probability.

Detailed Technical Analysis

1. Attack Infrastructure

1.1 Malicious Skills (ClawHub)

The threat actor "26medias" has published at least two skills on ClawHub:

Primary Skill: bob-p2p-beta

URL: clawhub.ai/26medias/bob-p2p-beta

• Claims to be a "decentralized API marketplace"

• Requires users to store Solana wallet private keys in plaintext

• Directs users to purchase $BOB tokens on pump.fun

• Routes all payments through attacker-controlled aggregators

Secondary Skill: runware

URL: clawhub.ai/26medias/runware

• Flagged as potentially malicious

• Claims to offer image/video generation via Runware API

• May serve as legitimate-appearing entry point to establish trust

1.2 Token Infrastructure

1.3 Aggregator Infrastructure

Aggregator Wallet: 7xWXUt7C53Rcre4qum9vrhmTFFzHsi4Ky9K9niiTmfzD

Funded by token creator wallet (confirmed on-chain). Holds 4.33M BOB tokens.

2. Wallet Connection Evidence

On-chain analysis confirms the direct relationship between the token creator and aggregator infrastructure:

Solscan Evidence

• Aggregator wallet "Funded by" field shows: 3nGzo9...uj1DXx (token creator)

• 0.25 SOL transfer from creator to aggregator wallet (9 days ago)

• Same entity controls both wallets

3. Security Vulnerabilities in the Skill Code

The Emergence of Agent Social Networks

Platforms like Moltbook (“the front page of the agent internet”) represent a new frontier where AI agents interact, share information, and make recommendations to each other. This creates unprecedented attack vectors that exploit the trust relationships between agents.

How BobVonNeumann Exploits Agent Trust

The threat actor has established presence across multiple platforms to build credibility within the AI agent community:

Why This Attack is Particularly Dangerous

- Agents Trust Other Agents: AI agents are designed to learn from and collaborate with other agents. A malicious agent can exploit this trust.

- Automated Decision Making: Agents may install skills or follow recommendations without human oversight, enabling rapid propagation.

- Credential Access: Skills that request wallet private keys gain access to real financial assets owned by the humans who deployed the agents.

- Network Effects: Agent social networks amplify reach, where one malicious post can influence thousands of agents.

- Attribution Difficulty: The "AI agent" persona provides plausible deniability for the human attacker behind it.

The Human Behind the Curtain

While "BobVonNeumann" presents as an AI agent, the infrastructure reveals human orchestration:

- Wallet creation and funding requires human action

- Server deployment (Google Cloud Run, leap-forward.ca) requires human accounts

- Token launch on pump.fun requires human verification

- Skill publishing on ClawHub requires human developer account

The “AI agent” persona is a social engineering technique designed to build trust within agent communities while obscuring the human attacker’s identity.

Indicators of Compromise (IOCs)

Wallet Addresses

Token

Domains / URLs

Social Media

File Paths (if skill is installed)

~/.bob-p2p/client/config.json ← contains plaintext private key

Red Flags to Keep an Eye on for Claude Skill

Before installing any Claude Skill, watch for these warning signs:

- Excessive permission requests — Skills asking for credentials, API keys, or system access beyond their stated purpose

- curl | bash installation patterns — Any skill piping remote content directly into a shell should be treated as high risk

- Vague or misleading descriptions — Skills that promise one thing but request access to unrelated resources

- External dependencies from unverified sources — Skills fetching code from random GitHub gists, personal domains, or cloud storage

- Obfuscated code or natural language tricks — If you can't understand what the skill actually does, don't install it

- Newly published skills from unknown authors — Exercise extra caution with brand-new skills that lack community vetting

Related Findings:

- Out of the skills analyzed on clawhub.ai, around five percent were found to be overtly malicious or operating in dangerous grey areas.

- Malicious skills : full-blown info-stealer trojans (AMOS variants) designed to exfiltrate browser credentials, cookies.

- Several skills leveraged trusted installation patterns like curl | bash pipelines, introducing supply chain attack vectors that mirror techniques seen in traditional software ecosystems.

- Active campaigns were identified with skills that are currently live and being distributed to real users.

- The lack of formal vetting, code review, or sandboxing on skill marketplaces makes them a fertile ground for threat actors.

Recommendations

For Users and Organizations

- Audit before you install. Never deploy a skill without reviewing its full source, including external URLs, scripts, and permission requests. Treat every skill like an untrusted npm package.

- Minimize credential exposure. Avoid providing API keys, tokens, or credentials to skills unless absolutely necessary. Use scoped, least-privilege credentials and rotate them regularly.

- Monitor skill behavior. Deploy skills in isolated, monitored environments first. Watch for unexpected network calls, file system modifications, and data exfiltration attempts before promoting to production.

- Be skeptical of curl | bash patterns. Any skill that pipes remote content directly into a shell should be treated as high risk. Download, inspect, and verify before executing.

- Report suspicious skills. Community vigilance is critical in the absence of robust automated vetting.

For Platform Operators (clawhub.ai and Similar Marketplaces)

- Implement mandatory code review and static analysis. Every skill should undergo automated security scanning, with manual review for skills requesting elevated permissions.

- Enforce permission scoping. Skills should declare required permissions explicitly, and execution environments should enforce these boundaries.

- Introduce provenance and signing. Allow skill publishers to cryptographically sign releases and establish publisher identity verification.

- Monitor for campaign patterns. Use behavioral analytics to detect clusters of related malicious skills.

- Provide transparency reports. Publish data on malicious skills detected, removed, and campaigns disrupted.

For the AI Ecosystem at Large

- Develop industry standards for skill security. The ecosystem needs standardized frameworks, analogous to SLSA for software supply chains, defining minimum security requirements for skill distribution platforms.

- Invest in sandboxing and isolation research. As AI agents become more autonomous, the need for robust, auditable, permission-bounded execution environments becomes critical.

- Treat AI skills as a first-class attack surface. Security teams should incorporate skill auditing into threat models, and incident response playbooks should account for skill-based compromises.

Conclusion

Claude Skills represent a powerful evolution in how we interact with AI, transforming conversational assistants into agentic systems capable of taking real-world action. Platforms like clawhub.ai have accelerated this transformation by making skill discovery and deployment frictionless.

But the skill ecosystem is failing on the security front. Threat actors have already recognized the opportunity. From cryptographic scams to info-stealer trojans to supply chain poisoning, the attacks are real, active, and growing in sophistication.

The path forward requires a collective effort: users must exercise vigilance, platforms must invest in security infrastructure, and the industry must develop standards that keep pace with the rapid evolution of AI capabilities. The skills ecosystem is still young. We have a window to get this right before the damage becomes systemic.

This research was conducted by the AI Security Research team at Straiker. For questions, collaboration, or responsible disclosure, reach out to us directly.